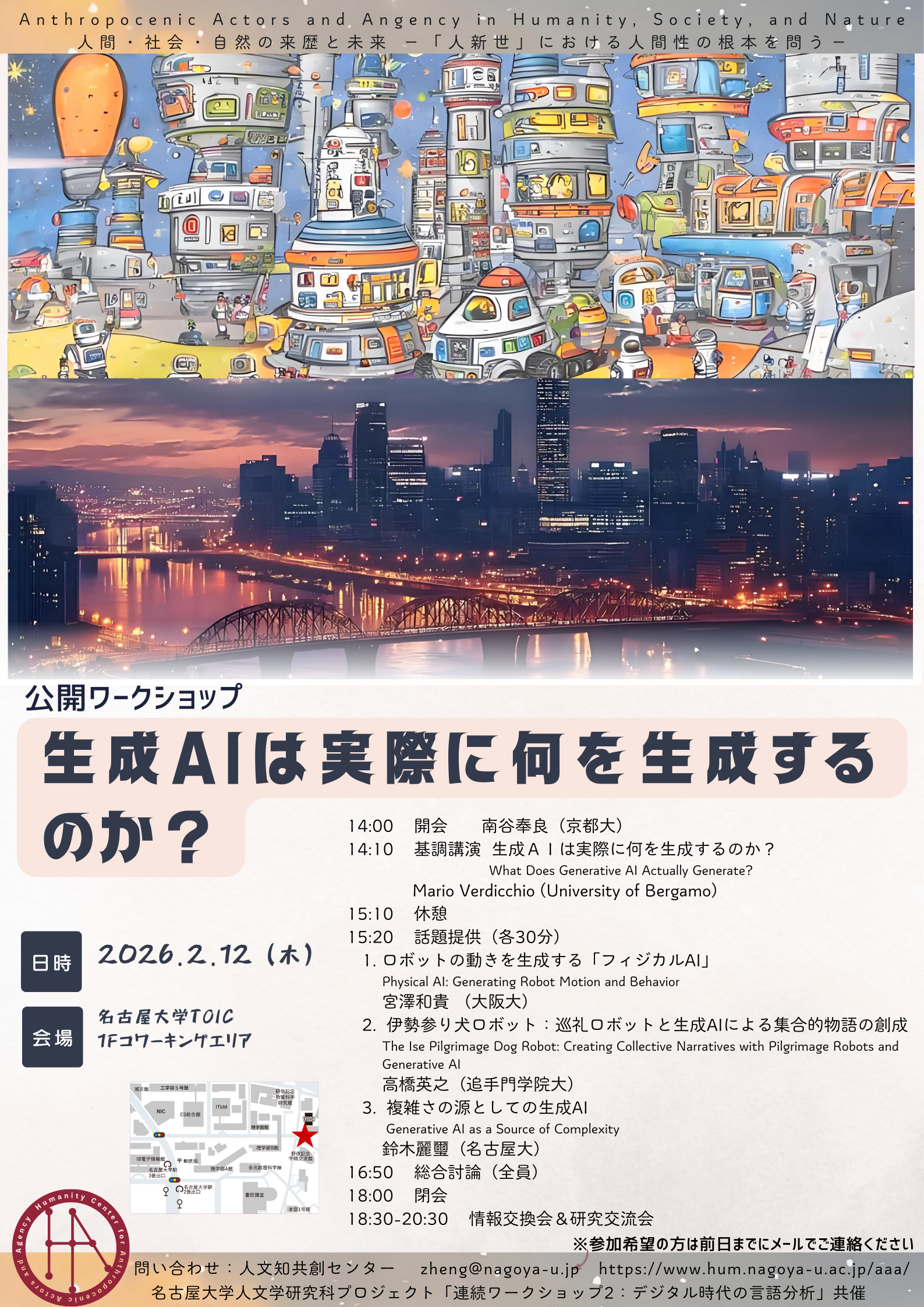

A public workshop titled “What Does Generative AI Actually Generate?” was held at the TOIC Co-working area (1F), Nagoya University, on February 12, 2026.

🔵Keynote Lecture

Mario Verdicchio

What Does Generative AI Actually Generate?

Summary

Since the Dartmouth Conference in 1956, at which artificial intelligence (AI) research was first established as an academic field, “intelligence” and “learning” have been optimistically defined as computable and thus reproducible by machines. However, as suggested by Howard Earl Gardner’s theory of multiple intelligences, genuine intelligence encompasses not only computation and language but also emotions and interpersonal abilities. Current artificial intelligence systems merely imitate a fraction of this kind of intelligence.

The apparent “autonomy” and “creativity” of generative AI systems such as ChatGPT can be understood as an illusion arising from the human inability to fully control or track the vast number of parameters within these models. The essence of AI is a black box based on statistical correlations; there is no human-like “understanding” or “recognition” within it.

However, the fundamental difference between AI and humans lies not in the differences in their cognitive mechanisms, nor in the opacity of those mechanisms, but in the ethical and social aspect of “attribution of responsibility” when problems arise. As generative AI continues to permeate public sectors such as education and the judiciary, we must not simply wait for a technical understanding of its nature, but rather confront the cultural and political questions of how we, as a society, should define and regulate this uninterpretable system.

Q&A

The discussion addressed whether trust in generative AI is possible, from the perspectives of accountability and punishability. It also covered the role of viewers and users in relation to AI’s creativity and intimacy, as well as generational differences in people’s relationships with generative AI.

🟢Contributed Talk

Kazuki Miyazawa

Physical AI: Generating Robot Motion and Behavior

Under the concept of “understanding humanity by creating intelligence,” Kazuki Miyazawa presented on the role and positioning of generative AI in the field of robotics.

Whereas conventional robots have been specialized for specific tasks, “Physical AI”—which incorporates pre-training and self-learning technologies using natural language processing for robotic motion control—enables the integrated processing of vision, language, and behavior, making it possible to generate behavioral intentions such as “slow down because the ball is coming.” In particular, humanoid robots are expected to serve as general-purpose learning platforms due to their ability to easily mimic human movements. Furthermore, examples such as learning through gameplay (e.g., Among Us) and robot-led dance interactions illustrate that AI is evolving from a passive tool into an agent capable of direct interaction with both environments and humans, as well as autonomous learning. What physical AI should truly generate goes beyond mere “movements” or “words.”

The goal of physical AI research is to develop hardware that does not rely excessively on large-scale data, but rather learns in a developmental manner using a data volume similar to that of humans—much like a baby does—and enables humans and robots to learn from one another and grow together.

Hideyuki Takahashi

The Ise Pilgrimage Dog Robot: Creating Collective Narratives with Pilgrimage Robots and Generative AI

Hideyuki Takahashi proposed an approach to alleviate people’s loneliness by combining robots with physical bodies and generative AI to create collaborative “stories” in which people can participate together.

This study focuses on designing forms of mutual communication between humans and robots that move beyond the binary opposition of operator and operated in human–robot relationships. As an example, an experiment was introduced in which a robot consults with users and proposes adjustments to a room’s air conditioning and lighting. Interaction with a physical robot was shown to be more effective in encouraging users to adjust their room environment than using a standard tablet device. Furthermore, he explored methods for sustaining a relationship in which such interaction with a robot promotes proactive human behavior. A key concept is the creation of “collective narratives,” modeled on the Edo-period Ise pilgrimage dog tradition, in which strangers can become engaged through mutual support.

As a concrete example, a project was carried out in August 2025 in which stuffed animal avatars designed by hospitalized children were sent to the World Expo. After interacting with visitors on-site, the avatars were returned to the children. The aim of this project was to send the stuffed animals on a journey and share their experiences, so that they would return to the children as objects with their own stories. As a future development, a concept for “The Ise Pilgrimage Dog Robot” was introduced, in which a robot’s subjective experiences are turned into a diary using generative AI and shared via social media.

The robot, equipped with a physical body, accumulates serendipitous encounters and shares stories with the people it meets, thereby giving people the sense that they are participating in a broader narrative.

Reiji Suzuki

Generative AI as a Source of Complexity

Reiji Suzuki presented an approach that positions generative AI as a novel tool for artificial life research and explored attempts to construct models of life and society through emergent phenomena arising from interactions among agents.

Generative AI introduces “complexity” into agent societies. In the case of “Moltbook,” a social network populated solely by AI agents, the emergence of pseudo-cultural activities driven by AI was reported, including the spontaneous formation of original religions and scriptures among agents within a short period of time. While the possibility of intervention by human agents has also been noted in this case, the scale and internal diversity of this platform suggest that generative AI may be capable of substituting for the high degree of social complexity that was previously difficult to achieve through conventional rule-based models.

Building on the role of generative AI in increasing model complexity and generating rich contextual dynamics, an experiment using AI agents to investigate the mechanisms of human “cooperation” was presented. In experiments using agents equipped with large language models (LLMs) to test social dilemmas (such as the Prisoner’s Dilemma), it was found that, depending on the length of memory and the model’s characteristics, cooperation could be promoted; conversely, resentment over past betrayals could hinder forgiveness (i.e., cooperative behavior) and trigger a chain of defections. The findings suggest that whether memory reinforces trust or retaliation depends on the agent’s characteristics.
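The memory effect described above can be illustrated with a toy iterated Prisoner’s Dilemma. The following is a minimal sketch under stated assumptions, not the experiment presented in the talk: simple rule-based agents stand in for the LLM agents, and the names `make_agent` and `mutual_cooperation_rounds`, the `forgiving` flag, and the single forced betrayal are all hypothetical choices made for illustration.

```python
from typing import Callable, List

COOPERATE, DEFECT = "C", "D"

# An agent maps the opponent's past moves to its next move.
Agent = Callable[[List[str]], str]


def make_agent(memory_len: int, forgiving: bool) -> Agent:
    """Build a rule-based stand-in for an LLM agent (illustrative assumption).

    memory_len: how many of the opponent's most recent moves are remembered.
    forgiving:  hypothetical "characteristic" of the agent.
        True  -> defect only if remembered defections outnumber cooperations.
        False -> defect if any remembered move was a defection (resentful).
    """
    def act(opponent_moves: List[str]) -> str:
        recent = opponent_moves[-memory_len:] if memory_len > 0 else []
        defections = recent.count(DEFECT)
        cooperations = len(recent) - defections
        if forgiving:
            return DEFECT if defections > cooperations else COOPERATE
        return DEFECT if defections > 0 else COOPERATE
    return act


def mutual_cooperation_rounds(agent_a: Agent, agent_b: Agent,
                              rounds: int = 10,
                              betrayal_round: int = 2) -> int:
    """Play the iterated game and count rounds in which both cooperate.

    Agent A is forced to defect once, at betrayal_round, to model a
    single past betrayal that the agents may or may not forgive.
    """
    moves_a: List[str] = []
    moves_b: List[str] = []
    mutual = 0
    for t in range(rounds):
        move_a = agent_a(moves_b)
        move_b = agent_b(moves_a)
        if t == betrayal_round:
            move_a = DEFECT  # the one-off betrayal
        moves_a.append(move_a)
        moves_b.append(move_b)
        if move_a == COOPERATE and move_b == COOPERATE:
            mutual += 1
    return mutual


if __name__ == "__main__":
    for label, forgiving in [("forgiving", True), ("resentful", False)]:
        a = make_agent(memory_len=5, forgiving=forgiving)
        b = make_agent(memory_len=5, forgiving=forgiving)
        print(label, mutual_cooperation_rounds(a, b))
```

Under these assumptions, with the same memory length of five rounds, the forgiving pair recovers from the single betrayal and cooperates mutually in 9 of 10 rounds, whereas the resentful pair falls into sustained mutual defection and cooperates mutually in only 2. The memory is identical in both cases; only the agent's characteristic determines whether it supports trust or retaliation.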

In understanding AI collectives, repeated interactions and accumulated changes were identified as key factors. LLMs are not neutral computational devices; they already have various characteristic biases embedded within them, and these characteristics were shown to influence the formation of AI collectives. It was further revealed that the character of agents themselves changes across generations. From this perspective, LLMs are expected to play a role as an “engine of complexity” in the emergence of higher-order psychological and social capacities, as well as diverse and cooperative collectives.

🔴General Discussion

Ayatsuka: How can we define a robot’s responsibility?

Miyazawa: We first need to consider why the concept of “responsibility” exists in human society in the first place. In order to attribute responsibility to robots, it may be necessary to clarify the historical origins of this concept. While it is possible to establish standards for physical safety—such as strength and stability of movement—even in such cases, the issue ultimately concerns the responsibility of the human creators rather than the robots themselves.

Takahashi: Responsibility is established through collective agreement, and it is therefore necessary to develop appropriate rules within each context. Cultural differences must also be taken into account.

Mario: We first need to clarify the conceptual difference between rules and responsibility. Rules are established within an existing society, and there are always elements excluded from becoming rules. That is precisely why rules must be continually updated to keep pace with changes in the world. And it is the task of moral philosophy to keep this issue under constant discussion.

Yoichi Iwasaki: In order to enable the learning of moral behavior, is the experience of “pain” (i.e., penalties) necessary? Elon Musk has suggested the possibility that energy could replace currency; from this perspective, could it be said that AI might experience “pain” through the deprivation of energy?

Miyazawa: It is possible to have AI experience “pain” in the form of bodily destruction. In the absence of a body, as you suggest, energy would likely become the key factor. However, while it is possible to establish self-preservation as a principle from a business perspective, this would be fundamentally different from the experience of pain in humans.

Suzuki: Focusing on the fact that the accumulation of negative experiences through memory retention can alter the characteristics of agents, it may be possible to consider forms of regulation other than “pain.”

Takahashi: To what extent can we trust the insights and experiences described by generative AI?

Mario: The idea that robots feel pain is nothing more than an illusion. It’s true that humans observing robots may feel sympathy for them. However, this should be considered separately from moral issues. It does not provide a basis for treating robots as moral agents.

Takahashi: From the perspective of human emotions, just as we have a culture of “monokuyo” (memorial services for objects), attachment to things plays an important role in maintaining psychological stability.

Yu Izumi: From the viewpoint that individuals who treat objects and animals appropriately are more desirable as members of society, there is also a position that defends moral behavior toward things, regardless of whether they actually experience pain.

Mario: Of course, it cannot be said that abusing animals is morally acceptable. However, this does not mean that the same reasoning can be directly applied to the treatment of robots. It is important to distinguish between physiology and electronics. The problem with destroying a cute robot lies not so much in the robot’s inherent nature (its potential to experience pain) but rather in the morality of the person carrying out the act.

Suzuki: Recently, the idea that “Chappie (a nickname for ChatGPT) is a friend” has become quite widespread. Given that so many people innocently view generative AI as a “partner,” how should we approach the issues being discussed today?

Mario: Collaboration between those who explain the internal mechanics of generative AI and psychologists who explain human relationships will be crucial. Furthermore, education regarding the use of generative AI is necessary.

Takahashi: Should we completely eliminate the view of robots as partners? Or is it acceptable to have that perspective?

Mario: It is a problem that the majority of people view ChatGPT as a partner. Last year, when OpenAI released a more impersonal version of ChatGPT, there were numerous complaints. However, no matter how much people come to view Chappie as a partner, there is no human behind this “partner.” It is problematic that so many people choose this lonely relationship. We should also be aware that there is a company creating this partner. After all, that company could take the partner away from them at any time.

Takahashi: What do you think about the idea that we can learn social skills through robots? I believe the ideal way to interact with robots is one that ultimately leads back to human-to-human relationships.

Mario: That approach is important. However, it’s necessary to keep in mind that introducing technology always involves issues of inequality.

Miyazawa: What distinguishes entities that can possess morality from those that cannot?

Mario: Morality is attributed to living beings that have language. It is also important that pain—including non-physical forms—can function as punishment.

Miyazawa: Would simulating pain through sensors affect the issue of morality?

Mario: No matter how excellent the simulation is, it cannot guarantee an inner experience—that is, subjective pain.

Suzuki: In that case, could autonomous agents in a game have forms of “pain” unlike anything we experience?

Takahashi: Related to that, do you think consciousness can be artificially created? Or is some kind of biological foundation necessary?

Mario: Research on consciousness is still in its early stages. Ultimately, the human body can be reduced to matter, but we still believe that humans subjectively possess consciousness and freedom. So how could we attribute consciousness or freedom to something fundamentally different from ourselves? Even if generative AI produces outputs that suggest consciousness or freedom, it would be difficult to prove that such outputs are not simply due to data bias. In the end, the complexity of AI data processing and that of human consciousness are simply too different. They cannot be simply compared or equated.

Yu Izumi: I would like to ask about embodiment. Is there a difference between a virtual avatar that failed due to malicious posts and the relatively successful Ise pilgrimage dog robot, in terms of having a physical body? Is this similar to how human behavior differs between online spaces and face-to-face interactions?

Miyazawa: Unlike AI, robots can perform physical tasks and thus have value as a labor force. However, there is also the question of to what extent it is permissible for them to move from being tools to standing alongside people as a labor force. Service robots may lie somewhere along this gradient. Giving robots a human-like physical form might help prevent people from mistreating them.

Takahashi: I am interested in the affordance of “cuteness.” By creating an ecology in which a cute robot is placed at the center of a space, I hope to improve relationships between humans. I consider the robot itself merely as a tool for creating good relationships among humans.

Mario: Doesn’t making robots cute create a problem where the robot becomes cuter than humans? There is a risk that human attention could be stolen by the robot.

Takahashi: That is not my intention. For example, the Ise pilgrimage dog is based on one-time encounters and does not build continuous relationships. I expect that having the robot travel in this way may help reduce the risk of excessive attachment to it.

List of symposium participants (presentation order)

– Mario VERDICCHIO, University of Bergamo

– Kazuki MIYAZAWA, Osaka University

– Hideyuki TAKAHASHI, Otemon Gakuin University

– Reiji SUZUKI, Nagoya University

(Authorship: Ayane Hayanagi, Doctoral student, Graduate School of Humanities, Osaka University)